The Rise of Claude Code Web Agents

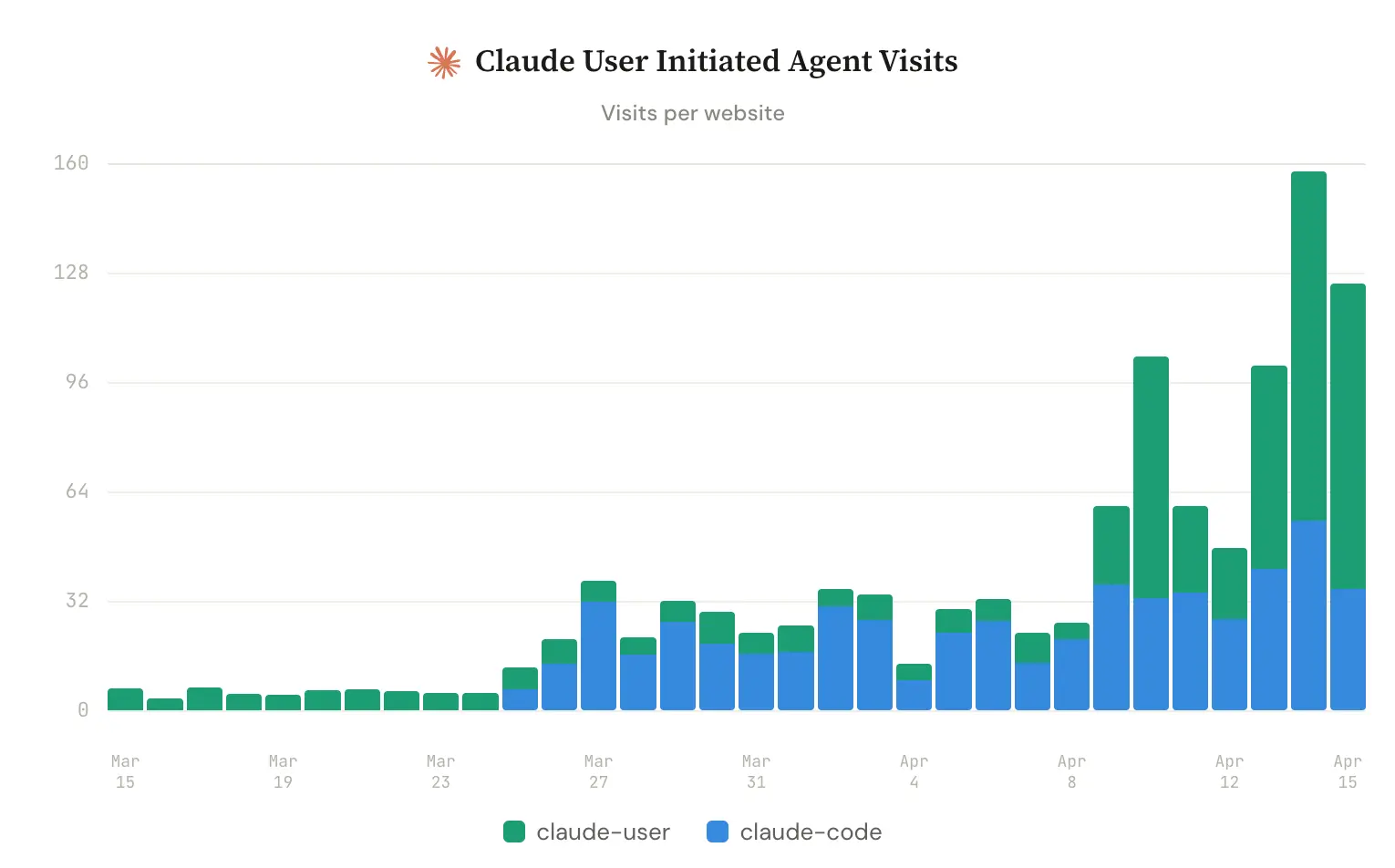

Agent visits are growing fast

2026 has been the year Claude Code went mainstream. At Siteline we process 3M+ requests per day from AI agents visiting company websites, so we've been tracking this evolution firsthand.

At the start of the year, sites saw only a handful of visits from Claude's "user-initiated" agent (triggered when a user prompts Claude, as opposed to its background crawler). Since March, that number has grown nearly 8x, to 60 visits per day for the average site. Anthropic introduced a distinct Claude Code user agent in late March, and its traffic has already doubled to 40+ visits per site per day.
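Separating these agents in request logs usually comes down to the User-Agent header. The sketch below assumes each agent can be recognized by a substring of that header. "ChatGPT-User" and "Claude-User" are the names the operators use for these agents, but the `claude-code` token is a hypothetical placeholder, so check the exact strings against your own logs before relying on any of them.

```python
from typing import List, Optional, Tuple

# (substring, label) pairs, matched case-insensitively in order.
# "chatgpt-user" and "claude-user" follow the agents' published names;
# "claude-code" is a HYPOTHETICAL token: confirm the real string in your logs.
AGENT_TOKENS: List[Tuple[str, str]] = [
    ("claude-code", "claude-code"),  # most specific first, in case a UA also contains "Claude-User"
    ("claude-user", "claude-user"),
    ("chatgpt-user", "chatgpt-user"),
]


def classify_agent(user_agent: str) -> Optional[str]:
    """Return an agent label for a User-Agent header, or None if no token matches."""
    ua = user_agent.lower()
    for token, label in AGENT_TOKENS:
        if token in ua:
            return label
    return None
```

Counting classified requests per site per day then gives figures comparable to the ones above. Ordering the list from most to least specific matters only if one agent's string can contain another's token.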

What are Claude agents visiting?

When we categorize the pages each bot hits, the differences are stark. Claude agents dive heavily into documentation, while ChatGPT agents focus on blog and marketing content. 64% of Claude Code visits go to docs vs. 23% for ChatGPT-User. On the flip side, 43% of ChatGPT-User visits hit the blog vs. 12% for Claude Code.

The table below normalizes visits as a share of each bot's total traffic, since raw volumes differ significantly between bots.

| Category | chatgpt-user | claude-user | claude-code |
|---|---|---|---|
| Docs | 23% | | 64% |
| Blog | 43% | | 12% |
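The normalization behind the table can be sketched as follows: count each bot's visits per page category, then divide by that bot's total so every bot's shares sum to 100%. The path prefixes in `CATEGORY_PREFIXES` are hypothetical; real sites need their own mapping from URLs to categories like docs and blog, and this is not necessarily how Siteline categorizes pages.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List, Tuple

# HYPOTHETICAL prefix rules; each site needs its own URL-to-category mapping.
CATEGORY_PREFIXES: List[Tuple[str, str]] = [("/docs", "docs"), ("/blog", "blog")]


def categorize(path: str) -> str:
    """Map a request path to a page category, defaulting to "other"."""
    for prefix, category in CATEGORY_PREFIXES:
        # Match "/docs" and "/docs/..." but not "/docsearch".
        if path == prefix or path.startswith(prefix + "/"):
            return category
    return "other"


def category_shares(visits: Iterable[Tuple[str, str]]) -> Dict[str, Dict[str, float]]:
    """Turn (bot, path) visit records into per-bot category shares summing to 1.0."""
    counts: Dict[str, Counter] = defaultdict(Counter)
    for bot, path in visits:
        counts[bot][categorize(path)] += 1
    shares: Dict[str, Dict[str, float]] = {}
    for bot, per_category in counts.items():
        total = sum(per_category.values())
        shares[bot] = {cat: n / total for cat, n in per_category.items()}
    return shares
```

Dividing by each bot's own total is what makes a low-volume bot's column comparable to a high-volume one; comparing raw counts would mostly reflect which bot sends more traffic.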

This makes sense when you think about what each agent is actually doing. A user prompting ChatGPT is often researching a topic or comparing options, so the agent reads blog posts and marketing pages. A Claude Code user is usually trying to build something: they have a goal for their app and need to figure out how to get there.

Discovery, integration, activation

Looking at the specific paths Claude Code visits, we can see a clear progression. First, discovery: the agent hits documentation landing pages and API references to understand what a service offers and whether it fits the user's needs. It's doing the research a developer used to do manually, scanning docs to evaluate whether a tool solves their problem.

Next, integration. Claude Code frequently requests MCP configuration files, /.well-known endpoints, and agent-install.txt files. These are the machine-readable instructions that let an agent connect to a service programmatically. In our customers' request data, MCP-related paths are among the most visited by Claude agents.

Finally, activation. We see Claude Code visiting getting-started guides, setup pages, pricing pages, and billing documentation. This is the agent trying to put everything together: install the SDK, configure credentials, understand the pricing tier, and get the user up and running.
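The three stages suggest a simple way to bucket agent requests by path. The keyword rules below are a hypothetical heuristic using crude substring matching, not Siteline's classifier: integration paths (`/.well-known/`, anything mentioning MCP, `agent-install.txt`) are checked first, then activation keywords, then generic docs and API prefixes, because a getting-started guide often lives under `/docs` and should count as activation rather than discovery.

```python
from typing import Tuple

# HYPOTHETICAL keyword rules for the three stages; tune them per site.
INTEGRATION_SUFFIXES: Tuple[str, ...] = ("agent-install.txt",)
ACTIVATION_KEYWORDS: Tuple[str, ...] = ("getting-started", "setup", "pricing", "billing")
DISCOVERY_PREFIXES: Tuple[str, ...] = ("/docs", "/api")


def funnel_stage(path: str) -> str:
    """Bucket a request path into discovery, integration, activation, or other."""
    p = path.lower()
    # Integration: machine-readable endpoints an agent uses to connect.
    if p.startswith("/.well-known/") or "mcp" in p or p.endswith(INTEGRATION_SUFFIXES):
        return "integration"
    # Activation: setup and pricing material, even when it lives under /docs.
    if any(keyword in p for keyword in ACTIVATION_KEYWORDS):
        return "activation"
    # Discovery: docs landing pages and API references.
    if p.startswith(DISCOVERY_PREFIXES):
        return "discovery"
    return "other"
```

Rule order does the real work here: the same path can match several keyword lists, and checking the most specific stage first keeps setup pages from being counted as generic docs browsing.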

Is Claude Code finding what it needs?

Not always. We analyzed the raw request logs to see where each bot runs into friction.

Claude Code has the highest error rate of the three bots at 13.1%, with nearly 30% of its documentation requests returning 404. The pattern is consistent: it constructs plausible URLs like /docs/integrations and /docs/api that don't exist on the target site. It's guessing where docs live based on common conventions rather than following links or reading a sitemap. Easy fix: add a sitemap or a top-level /docs index page.
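A sitemap following the sitemaps.org protocol is one way to act on that fix: it lists every valid docs URL so an agent has an alternative to guessing, although whether a given agent actually consults it is not guaranteed. The sketch below builds one with Python's standard library; `build_sitemap`, the base URL, and the paths are placeholders for your own docs inventory.

```python
import xml.etree.ElementTree as ET
from typing import Iterable

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(base_url: str, paths: Iterable[str]) -> str:
    """Build a sitemaps.org XML document listing each unique path once."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for path in sorted(set(paths)):
        url = ET.SubElement(urlset, "url")
        # <loc> must be an absolute URL; ElementTree escapes characters like "&".
        ET.SubElement(url, "loc").text = base_url.rstrip("/") + path
    xml_body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + xml_body
```

For example, `build_sitemap("https://example.com", ["/docs", "/docs/api", "/docs/quickstart"])` yields one `<url>` entry per page. Serving the result at a stable location such as /sitemap.xml and adding a `Sitemap:` line to robots.txt makes it discoverable by crawlers that follow the protocol.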

Claude Code requests are also about 2x slower to serve at the median than other bots' requests (181ms vs. 82-87ms). Part of this comes from heavier doc pages, but failed requests are actually slower than successful ones (325ms vs. 257ms for Claude Code's 4xx vs. 2xx responses), which suggests servers are doing real work before returning error pages rather than short-circuiting early.
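One way to short-circuit early is to reject unknown docs paths before any page rendering happens. The WSGI middleware below is a minimal sketch, assuming the set of valid docs paths (for instance, the same list that feeds a sitemap) is known at startup; `fast_404` and its parameters are illustrative names, and most web frameworks offer their own routing hooks for the same idea. The 404 body also points at the docs index so an agent that guessed wrong has somewhere to go next.

```python
from typing import Callable, Iterable, List, Tuple

# Loose WSGI type aliases to keep the sketch self-contained.
StartResponse = Callable[[str, List[Tuple[str, str]]], object]
WSGIApp = Callable[[dict, StartResponse], Iterable[bytes]]


def fast_404(app: WSGIApp, known_paths: Iterable[str], prefix: str = "/docs") -> WSGIApp:
    """Wrap a WSGI app so unknown paths under `prefix` get a cheap 404.

    Paths are compared without a trailing slash, so "/docs/api/" matches a
    known path of "/docs/api".
    """
    known = {p.rstrip("/") or "/" for p in known_paths}
    body = f"Not found. Documentation index: {prefix}\n".encode()

    def wrapped(environ: dict, start_response: StartResponse) -> Iterable[bytes]:
        path = environ.get("PATH_INFO", "/")
        under_prefix = path == prefix or path.startswith(prefix + "/")
        if under_prefix and (path.rstrip("/") or "/") not in known:
            # Respond before the wrapped app does any rendering or data access.
            start_response("404 Not Found", [
                ("Content-Type", "text/plain; charset=utf-8"),
                ("Content-Length", str(len(body))),
            ])
            return [body]
        return app(environ, start_response)

    return wrapped
```

Paths outside the prefix pass straight through to the wrapped app, so the check only adds a set lookup to docs requests. The same idea could equally live in a reverse proxy or CDN rule, even earlier in the request path.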

What this means for B2B software with a technical audience

Since last year, GEO and AEO (generative and answer engine optimization) efforts have focused on getting AI to choose your product at the top of the funnel. The data here tells a different story: even after a customer has decided to use your product, agents need to handle installation and activation efficiently. That's where a lot of drop-off happens, well before conversion and payment.

Nobody likes reading dev docs. Claude Code is doing it for them now, and it needs docs that are discoverable, concise, and up to date.