How Websites Will Need to Adapt for Their New Agentic Visitors

Liz Reid, head of Google Search, recently said something worth paying attention to: "There will be a world in which agents are doing a lot of the interaction on the internet... and are talking with each other, not just with humans."

We're still a ways away from that reality. But I don't think it's farfetched, and it raises a question most businesses haven't seriously considered yet: how will websites prepare for visitors that aren't human?

The web has a compatibility problem

Think about what happens when an AI agent tries to interact with a typical modern website today.

It hits a JavaScript-rendered single-page app that needs full browser execution to see any content. There's a cookie consent banner blocking the page. A CAPTCHA. Infinite scroll with no pagination. Forms that require visual context to understand. Navigation that depends on hover states and animations.

All of this was designed for a human sitting in front of a screen with a mouse. For an AI agent trying to understand what your site offers, find specific information, or complete a task on behalf of a user, it's a wall.

We see this playing out in server logs every day at Siteline. Agent traffic is already a meaningful share of total web traffic for many sites, and it's growing fast. But most of the web still optimizes exclusively for human visual experience at the expense of machine readability.

How agents actually interact with websites today

AI agents interact with sites through a few different approaches, and which one they use depends on what's available to them.

- The most common is simply reading and crawling. Agents fetch pages over HTTP, parse content, and follow links, similar to traditional crawlers. The difference is they have semantic understanding. They're not just indexing keywords; they can summarize, compare, and execute multi-step tasks using your content.

- When structured data is available (schema.org JSON-LD markup for products, prices, availability, FAQs, entity relationships), agents lean on it heavily. This is far more reliable for them than trying to extract the same information from arbitrary page layouts.

- Where neither of those is enough, agents fall back to browser automation: navigating your UI programmatically, clicking buttons, filling forms. This works, but it's brittle and slow, and it breaks whenever you update your front end.
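To make the structured-data path concrete, here's a minimal sketch of how an agent might pull JSON-LD out of a page instead of guessing at the layout. It uses only Python's standard library; the sample page, the product name, and the `extract_json_ld` helper are made up for illustration, and real agents will have their own (more robust) extraction logic.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the parsed contents of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.items = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        # Script contents can arrive in several chunks, so buffer them.
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.items.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed markup: an agent would have to fall back to page text


def extract_json_ld(html: str) -> list:
    """Return every JSON-LD object found in the given HTML string."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    return parser.items


# Hypothetical page: the price appears both in the markup and in JSON-LD.
SAMPLE_PAGE = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "Example Widget",
 "offers": {"@type": "Offer", "price": "19.99", "priceCurrency": "USD"}}
</script>
</head><body><div class="price">$19.99</div></body></html>
"""

items = extract_json_ld(SAMPLE_PAGE)
print(items[0]["name"], items[0]["offers"]["price"])
```

The agent never has to work out which `div` holds the price. It reads a typed field, which is why this path holds up better than layout scraping.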

Practical steps you can take today

You don't need to rebuild your site. The path to agent readiness builds on foundations you likely already have, and honestly, most of this is just good SEO practice with a new lens.

Start with structured data. Implement rich schema.org JSON-LD for your core entities: products, services, locations, FAQs, support flows. This is likely the single highest-leverage change you can make, because it lets any agent consume your content directly instead of trying to parse your visual layout. If you're already doing structured data for traditional search, you're ahead of most.
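If you're starting from scratch, generating the markup can be as simple as serializing a record you already have. The sketch below builds a schema.org `Product` block in Python; the `product_json_ld` function and the example values are hypothetical, and a real implementation would cover more properties and live in your templating layer.

```python
import json


def product_json_ld(name: str, price: float, currency: str, in_stock: bool) -> str:
    """Render a schema.org Product as a JSON-LD <script> tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": "https://schema.org/InStock"
            if in_stock
            else "https://schema.org/OutOfStock",
        },
    }
    # Escape "</" so a stray "</script>" inside a value cannot close the tag early.
    payload = json.dumps(data).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"


tag = product_json_ld("Example Widget", 19.99, "USD", in_stock=True)
print(tag)
```

Drop the resulting tag into the page `<head>` and validate it with a structured-data testing tool before relying on it.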

Make content comprehensive and well-structured. Topic-level, answer-complete pages with clear hierarchy and internal linking. Agents synthesize answers from your content, so if it's fragmented across dozens of thin pages, they can't construct a reliable picture of what you offer. This has always been good content strategy; it just matters even more now.

Get the technical fundamentals right. Server-side rendered content, fast load times, crawlable pages, clean URLs: the same things that help traditional SEO are now also the baseline for agent crawlers. If your content requires full JavaScript execution to be visible, a significant and growing share of your visitors literally can't see it.
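A quick way to sanity-check the JavaScript point is to look at your raw HTML the way a non-rendering crawler would: ignore scripts and styles and count the words that are left. This is a rough sketch, not a crawler simulation; the `words_without_js` helper and both sample pages are invented for illustration.

```python
from html.parser import HTMLParser


class VisibleTextCounter(HTMLParser):
    """Counts words in the HTML that are not inside <script> or <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.words = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.words += len(data.split())


def words_without_js(html: str) -> int:
    """Approximate how many words a client sees if it never executes JavaScript."""
    counter = VisibleTextCounter()
    counter.feed(html)
    counter.close()
    return counter.words


# Hypothetical client-rendered shell versus a server-rendered page.
SPA_SHELL = '<html><body><div id="root"></div><script src="/app.js"></script></body></html>'
SSR_PAGE = "<html><body><h1>Example Widget</h1><p>In stock, ships in two days.</p></body></html>"

print(words_without_js(SPA_SHELL), words_without_js(SSR_PAGE))
```

If your key pages come back near zero, an agent that only fetches HTML is looking at an empty shell.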

Beyond the fundamentals: the emerging technologies

The fundamentals above will get you most of the way there. But there's a more forward-looking layer of technology emerging that's worth understanding, especially if you run a complex web application or expect agents to eventually transact on your site.

There's no single universal standard yet, but several practical approaches with real institutional backing are gaining traction. A few are worth watching closely, and a few are already live 👀

Cloudflare: markdown conversion and scraping API

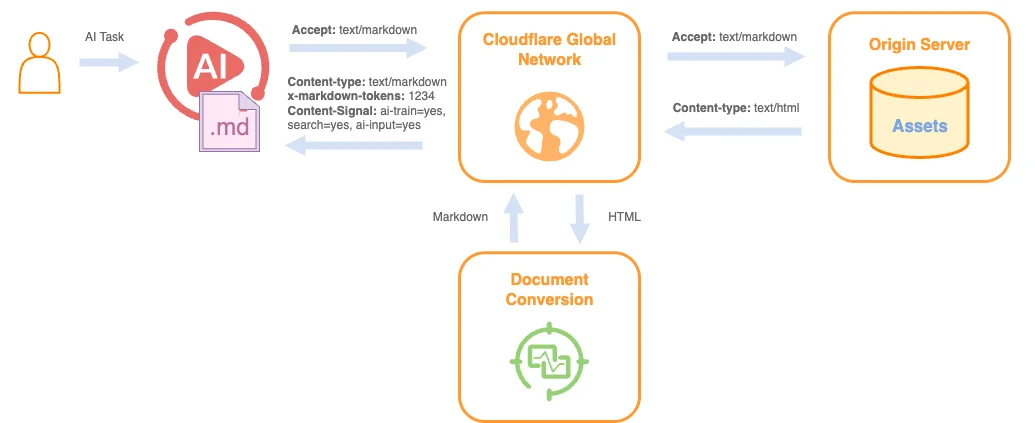

Cloudflare has been one of the most active players in making the web agent-friendly. In February 2026, they launched Markdown for Agents: a feature that automatically converts HTML pages to markdown at the edge when AI agents request them.

The point of the conversion is to cut how much data the agent needs to ingest. The same blog post that takes 16,180 tokens in HTML drops to 3,150 in markdown -- a reduction of roughly 80%. For agents processing hundreds of pages per query (which is increasingly common in RAG systems), this changes the economics entirely.

It works through standard HTTP content negotiation. When an agent requests a page with an Accept header that asks for markdown, Cloudflare's edge network intercepts the response and returns clean markdown. The origin server doesn't need to change anything. They've also included content-signal headers that let site owners declare how their content can be used by AI systems (for training, search, inference, etc.).
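From the agent's side, the negotiation described above might look something like the sketch below. Treat the specifics as assumptions to check against Cloudflare's documentation: I'm assuming the media type is `text/markdown`, that the policy arrives in a header named `Content-Signal`, and that it is a comma-separated list of `key=yes|no` pairs. The helper names and URL are hypothetical, and nothing here actually sends a request.

```python
from urllib.request import Request


def markdown_request(url: str) -> Request:
    """Build a request that prefers markdown but still accepts HTML."""
    return Request(url, headers={"Accept": "text/markdown, text/html;q=0.8"})


def parse_content_signal(value: str) -> dict:
    """Parse an assumed 'key=yes, key=no' header value into booleans."""
    signals = {}
    for part in value.split(","):
        key, sep, setting = part.strip().partition("=")
        if sep:  # ignore fragments that are not key=value pairs
            signals[key.strip()] = setting.strip().lower() == "yes"
    return signals


# Hypothetical URL; the request is constructed but not sent.
request = markdown_request("https://example.com/blog/post")
print(request.get_header("Accept"))

# Hypothetical header value a site owner might publish.
signals = parse_content_signal("ai-train=no, search=yes, ai-input=yes")
print(signals)
```

A site that doesn't support the markdown response simply returns HTML as usual, which is the nice property of doing this through content negotiation.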

Cloudflare also recently launched a crawling API that gives agents a structured way to access site content programmatically, rather than relying on traditional crawling.

If you're already on Cloudflare, these are probably the most accessible entry points for making your site agent-friendly. Minimal to no code changes required.

WebMCP: turning your website into a tool surface

This one is earlier-stage but has the most interesting long-term implications.

WebMCP, from Google Chrome, is currently in early preview and is co-authored by engineers at Google and Microsoft. The core idea: instead of agents scraping your DOM and guessing what buttons do, your web page declares what actions are available as structured "tools" that agents can call directly through the browser. No pre-integration required, unlike traditional MCP setups that need a backend server.

So instead of an agent trying to figure out which button to click and which form to fill, your page declares high-level actions like "search products," "book reservation," or "check order status." Agents call these tools through the browser, which handles execution and authentication via existing session cookies.

The developer experience is surprisingly simple. You can add a few attributes to existing HTML forms and the browser converts them into tools automatically. Or you can register tools in JavaScript with descriptions and input schemas. Either way, you're essentially building a mini-API surface directly in the front end.
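To be clear, WebMCP is a browser-side JavaScript API, and what follows is not WebMCP code. It's a language-neutral model of the idea, written in Python: a page registers named tools with a description and typed inputs, and an agent discovers and calls them instead of poking at buttons. Every name here (`Tool`, `ToolSurface`, `check_order_status`, the order data) is hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Tool:
    name: str
    description: str
    required_inputs: dict  # input name -> expected Python type
    handler: Callable[..., dict]


class ToolSurface:
    """A toy registry standing in for the tools a page would declare."""

    def __init__(self):
        self._tools = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def describe(self) -> list:
        """What an agent would read to learn which actions exist."""
        return [
            {
                "name": t.name,
                "description": t.description,
                "inputs": {k: v.__name__ for k, v in t.required_inputs.items()},
            }
            for t in self._tools.values()
        ]

    def call(self, name: str, **kwargs) -> dict:
        """Validate inputs against the declaration, then run the handler."""
        tool = self._tools[name]
        for key, expected in tool.required_inputs.items():
            if not isinstance(kwargs.get(key), expected):
                raise ValueError(f"{name}: '{key}' must be {expected.__name__}")
        return tool.handler(**kwargs)


surface = ToolSurface()
surface.register(
    Tool(
        name="check_order_status",
        description="Look up the shipping status of an order.",
        required_inputs={"order_id": str},
        # Stub handler; a real page would call its own order-lookup logic.
        handler=lambda order_id: {"order_id": order_id, "status": "shipped"},
    )
)

print(surface.describe())
print(surface.call("check_order_status", order_id="A123"))
```

The design point is the declaration step: the agent gets a name, a description, and an input contract up front, so nothing depends on how the page happens to be laid out.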

As VentureBeat reported, one of the Google engineers involved described WebMCP as aiming to become "the USB-C of AI agent interactions with the web." That's an ambitious framing, but the fact that both Google and Microsoft are co-authoring the spec suggests this has real momentum. Native browser support across Chrome and Edge is expected in the fall.

Identity and policy: Web Bot Auth and AIPREF

A parallel set of standards is forming around how agents identify themselves and how sites govern agent access. This area has seen some speculative proposals over the past year (llms.txt being a notable example that didn't gain traction), but the efforts that have serious institutional backing are worth paying attention to.

Web Bot Auth is a formal IETF working group with backing from Cloudflare, Akamai, Vercel, and Stytch. It uses cryptographic signatures so agents can prove their identity through a public-key registry. This isn't theoretical: OpenAI's ChatGPT agent is already signing its requests using Web Bot Auth. Vercel's bot verification supports it. Akamai has built verification into their bot management products.

On the policy side, AIPREF is another IETF working group, with drafts from engineers at Google and Mozilla, that's building the next-generation robots.txt for AI. Instead of the current patchwork of non-standard signals that different AI companies may or may not respect, AIPREF is defining a common vocabulary for expressing preferences about how content is used for training, search, and inference.

The model that's taking shape has three layers: a policy layer (what agents can do), an identity layer (who's asking), and an enforcement layer (checking requests against your policies and logging outcomes). Cloudflare's content-signal headers are already aligned with this direction, which is a good sign for near-term adoption.
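Here's a small sketch of how those three layers fit together in code. It's a conceptual model only: the class names, the purpose strings, and the `identity_verified` flag (which stands in for whatever signature check Web Bot Auth would perform upstream) are all assumptions for illustration, not part of any spec.

```python
from dataclasses import dataclass, field


@dataclass
class Policy:
    """Policy layer: what agents are allowed to do with the content."""
    allowed_purposes: set
    require_verified_identity: bool = True


@dataclass
class AgentRequest:
    """Identity layer: who is asking, and whether that claim was verified."""
    agent_name: str
    purpose: str
    identity_verified: bool  # result of a signature check done elsewhere


@dataclass
class Enforcer:
    """Enforcement layer: check each request against policy and log the outcome."""
    policy: Policy
    log: list = field(default_factory=list)

    def check(self, request: AgentRequest) -> bool:
        if self.policy.require_verified_identity and not request.identity_verified:
            decision = "deny: unverified identity"
        elif request.purpose not in self.policy.allowed_purposes:
            decision = f"deny: purpose '{request.purpose}' not permitted"
        else:
            decision = "allow"
        self.log.append((request.agent_name, request.purpose, decision))
        return decision == "allow"


# Hypothetical site policy: search and inference are fine, training is not.
enforcer = Enforcer(Policy(allowed_purposes={"search", "inference"}))

print(enforcer.check(AgentRequest("example-agent", "search", identity_verified=True)))
print(enforcer.check(AgentRequest("example-agent", "training", identity_verified=True)))
print(enforcer.check(AgentRequest("unknown-bot", "search", identity_verified=False)))
print(enforcer.log)
```

Keeping the layers separate means you can change what you permit without touching how identity is proven, and the log gives you a record of what was actually requested.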

Agent-to-agent interoperability

Further out, there's also work happening at the framework level on how agents describe their own capabilities to each other. Efforts like AGNTCY, Open Agent Schema, and ACP are working to standardize how agents communicate across different frameworks (LangChain, AutoGen, etc.). As these mature, expect patterns where your website or API is described in a common schema that many agents can understand, similar to how OpenAPI works for REST today. This is still pretty early, but worth keeping on your radar.

Looking ahead

The web's last major platform shift was desktop to mobile, and the businesses that treated mobile as an afterthought paid for it over time. The next shift is from human-only to human-plus-agent, and the dynamics feel similar.

When Liz Reid talks about agents interacting with the internet, she's describing a near-term future where a meaningful share of your "visitors" are agents acting on behalf of people: researching products, comparing options, booking services, completing transactions. Your site either works for them, or it doesn't exist in their world.

The good news is that most of the practical steps are incremental and build on things that already make your site better for humans and search engines too. Strong SEO fundamentals, clean structured data, well-organized content, good technical health -- these aren't just traditional best practices anymore. They're the foundation of agent accessibility.

The businesses that start now will have a real advantage as agent traffic continues to grow. And the emerging standards coming from Cloudflare, Chrome, the IETF, and others suggest that the tooling to make this easier is arriving faster than most people expect.